Difference between revisions of "Almeida-Pineda recurrent backpropagation"

Hawaiisunfun (Talk | contribs) |

Hawaiisunfun (Talk | contribs) |

||

| Line 8: | Line 8: | ||

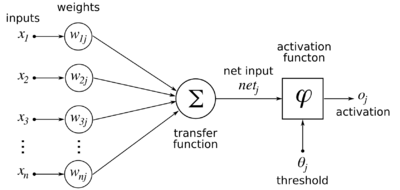

[[File:ArtificialNeuronModel english.png|thumb|right|400px|Model of a neuron. <i>j</i> is the index of the neuron when there is more than one neuron. The activation function for backpropagation is sigmoidal. [https://www.researchgate.net/figure/A-back-propagation-neural-network-with-the-sigmoid-function-used-as-activation-function_fig5_44858457 Source]]]

[[File:Artificial_neural_network.svg|thumb|right|250px|A feedforward network. In the Almeida-Pineda model, connections may go from any neuron to any neuron, backwards or forwards. [https://slideplayer.com/slide/13853243/ Source]]]

Revision as of 02:30, 20 July 2019

Almeida-Pineda recurrent backpropagation is an error-driven learning technique developed in 1987 by Luis B. Almeida[1] and Fernando J. Pineda.[2][3] It is a supervised learning technique, meaning that the desired outputs are known beforehand, and the task of the network is to learn to generate the desired outputs from the inputs.

Unlike a feedforward network, a recurrent network may have connections from any neuron to any neuron, in any direction.


Model

Given a set of k-dimensional inputs with values between 0 and 1 represented as a column vector:

and a nonlinear neuron whose synaptic weights from the inputs are initially random, uniformly distributed between -1 and 1:

then the output y of the neuron is defined as follows:

The weights are then updated according to the following equation:

where η is some small learning rate.
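The relax-then-update cycle can be sketched in code. The sketch below is a minimal illustration under stated assumptions, not a reproduction of the equations on this page: it assumes a discrete-time fixed-point formulation in which the activities settle to y = σ(Wy + x), a squared error measured on designated output neurons, and a second, linear relaxation that settles the error terms z. The function names (`relax`, `relax_error`, `train_step`), the network size, and the input and target values are all hypothetical, and both relaxations are assumed to converge, which is not guaranteed for arbitrary weights.

```python
import numpy as np

def sigmoid(u):
    return 1.0 / (1.0 + np.exp(-u))

def relax(W, ext, n_steps=200):
    """Iterate y <- sigmoid(W y + ext) toward a fixed point (assumed to exist)."""
    y = np.zeros(W.shape[0])
    for _ in range(n_steps):
        y = sigmoid(W @ y + ext)
    return y

def relax_error(W, y, e, n_steps=200):
    """Iterate the linear error network z <- y(1-y) * (W^T z + e).

    Errors flow backwards through the transposed weights.
    """
    d = y * (1.0 - y)              # sigmoid derivative at the fixed point
    z = np.zeros_like(y)
    for _ in range(n_steps):
        z = d * (W.T @ z + e)
    return z

def train_step(W, ext, target, out_mask, eta=0.5):
    """One update: relax activities, relax error terms, then adjust weights."""
    y = relax(W, ext)
    e = np.where(out_mask, target - y, 0.0)   # error only on output neurons
    z = relax_error(W, y, e)
    W_new = W + eta * np.outer(z, y)          # delta w_ij = eta * z_i * y_j
    return W_new, 0.5 * np.sum(e ** 2)

# Hypothetical 4-neuron network: neurons 0 and 1 receive external input,
# neuron 3 is the only output neuron.
rng = np.random.default_rng(0)
W = rng.uniform(-1.0, 1.0, size=(4, 4))       # initial weights in [-1, 1]
ext = np.array([0.2, 0.9, 0.0, 0.0])          # inputs between 0 and 1
target = np.array([0.0, 0.0, 0.0, 0.8])
out_mask = np.array([False, False, False, True])

errors = []
for _ in range(200):
    W, err = train_step(W, ext, target, out_mask)
    errors.append(err)
```

In this formulation the weight matrix has no feedforward restriction, so `W` may contain backward connections and self-connections. The backward pass reuses the same connections in the reverse direction, which is the property discussed under Objections.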

Derivation

The error terms

Objections

Although mathematically sound, the Almeida-Pineda model, like feedforward backpropagation, is biologically implausible: it requires neurons to communicate error terms backwards through their connections in order to compute weight updates.

References

1. Almeida, Luis B. (June 1987). "A learning rule for asynchronous perceptrons with feedback in a combinatorial environment". Proceedings of the IEEE First International Conference on Neural Networks.

2. "Generalization of backpropagation to recurrent neural networks". In Anderson, Dana Z. Neural Information Processing Systems. Springer (1988). pp. 602-611. ISBN 978-0883185698.

3. Pineda, Fernando J. (1989). "Recurrent backpropagation and the dynamical approach to adaptive neural computation". Neural Computation 1: 161-172.

4. Hopfield, J. J. (May 1984). "Neurons with graded response have collective computational properties like those of two-state neurons". Proceedings of the National Academy of Sciences of the United States of America 81: 3088-3092.

5. "Deterministic Boltzmann learning in networks with asymmetric connectivity". In Touretzky, D. S.; Elman, J. L.; Sejnowski, T. J.; Hinton, G. E. Connectionist Models: Proceedings of the 1990 Summer School. Morgan Kaufmann Publishers (1991). pp. 3-9. ISBN 978-1558601567.